Today I set up a small stack on my own server using Docker, Cloudflare Tunnel, and Traefik.

The goal was simple: I wanted a cleaner way to expose and manage multiple projects without treating every new deployment like a one-off configuration job.

The useful part was not just that it worked. It made the server feel like a reusable system.

Why I wanted this

I like keeping small projects available online, but once there is more than one service involved, setup work starts repeating itself very quickly.

You need routing, domain handling, HTTPS, container management, and some way to stop the server from becoming a pile of unrelated manual decisions.

I wanted something that felt structured enough to grow, but still lightweight enough for personal projects.

The stack

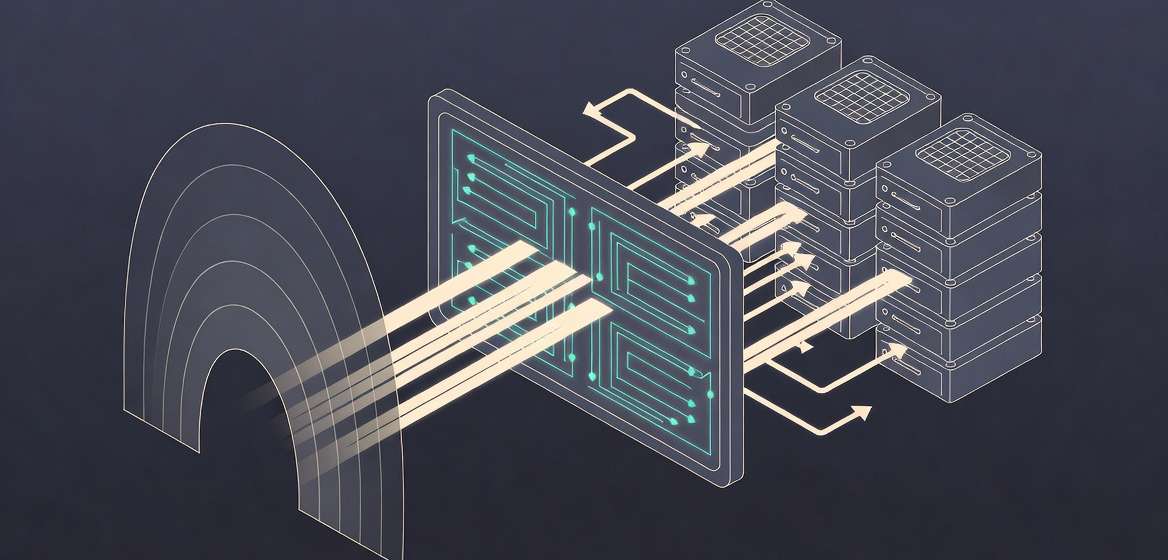

- Docker runs the services

- Traefik handles reverse proxying and routing

- Cloudflare Tunnel connects the server to the outside world through an outbound-only connection, instead of the usual model of exposing ports directly

Docker gives each project a predictable runtime shape. Traefik gives those projects a consistent entry point. Cloudflare Tunnel removes a lot of the usual friction around exposing services safely.

What makes the stack work is that the responsibilities are separated in a sensible way. Containers are responsible for running applications. Traefik is responsible for routing and service discovery. The tunnel is responsible for controlled external access. Once those boundaries are clear, the setup becomes easier to extend without each layer leaking too much into the others.
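One way this separation of responsibilities could look in practice is a small "edge" stack that runs Traefik and the tunnel connector side by side on a shared Docker network. This is a minimal sketch, not the exact files behind this setup: the image tags, the `TUNNEL_TOKEN` variable, and the service names are assumptions. The `proxy` network is declared `external`, so it would be created once beforehand with `docker network create proxy`.

```yaml
# Hypothetical edge stack: Traefik + cloudflared on the shared "proxy" network.
services:
  traefik:
    image: traefik:v3.0            # version pin is an assumption
    restart: unless-stopped
    command:
      - --providers.docker=true
      # Only route containers that opt in with traefik.enable=true
      - --providers.docker.exposedbydefault=false
      # Reach backend containers over the shared network
      - --providers.docker.network=proxy
      # The "web" entry point referenced by project labels
      - --entrypoints.web.address=:80
    volumes:
      # Read-only socket access so Traefik can discover containers and labels
      - /var/run/docker.sock:/var/run/docker.sock:ro
    networks:
      - proxy
    # No ports: section; traffic reaches Traefik only through cloudflared

  cloudflared:
    image: cloudflare/cloudflared:latest
    restart: unless-stopped
    # Token-based tunnel; hostname rules are managed in the Cloudflare dashboard
    command: tunnel --no-autoupdate run
    environment:
      - TUNNEL_TOKEN=${TUNNEL_TOKEN}
    networks:
      - proxy

networks:
  proxy:
    external: true
```

With a layout like this, a dashboard hostname rule would point at `http://traefik:80`, since both containers resolve each other by service name on the `proxy` network.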

Why not just keep it simpler?

That is a fair question, because for one app, a simpler setup is often good enough. A straightforward reverse proxy config is not a problem when there is only one destination behind it.

The problem starts when the server stops being “the place where one app runs” and becomes “the place where different projects may come and go over time.”

That is the point where I stop caring only about whether something works and start caring more about whether the structure will still feel reasonable after the fourth or fifth service.

Where this starts to make sense

With a single app, almost any setup can feel acceptable. But once there are multiple services, different hostnames, and the possibility of adding more over time, the difference between an ad hoc setup and a structured one becomes obvious.

This is where Traefik stops feeling optional. It becomes the layer that gives the server a stable structure. Services become easier to reason about, routing stops feeling ad hoc, and adding another project starts to look like a repeatable step instead of a fresh configuration job.

The most useful part was the reduction in repeated decisions:

- services run in containers

- routing is handled in one consistent way

- external access follows the same pattern

Adding another project no longer looks like “what do I need to invent for this one?” It looks more like “how does this fit into the structure that already exists?”

That is a much better place to be, especially for side projects and internal tools.

What adding a new project looks like

At a high level, adding a new project comes down to two things:

- add a hostname rule in the Cloudflare Tunnel dashboard

- add Traefik labels to the project’s `docker-compose.yaml`

On the Cloudflare side, the rule is straightforward: point something like test.semiherdogan.net to the Traefik entry point behind the tunnel.
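Here the rule lives in the dashboard. For a locally managed tunnel, the same rule would sit in cloudflared's `config.yml` instead. A rough sketch, assuming Traefik is reachable from the cloudflared container as `traefik` on port 80; the tunnel UUID and credentials path are placeholders:

```yaml
# cloudflared config.yml for a locally managed tunnel (placeholders in <>)
tunnel: <TUNNEL-UUID>
credentials-file: /etc/cloudflared/<TUNNEL-UUID>.json

ingress:
  # Send this hostname to Traefik's "web" entry point
  - hostname: test.semiherdogan.net
    service: http://traefik:80
  # cloudflared requires a final catch-all rule
  - service: http_status:404
```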

On the container side, the project can stay very small:

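```yaml
services:
  app:
    build: .
    restart: unless-stopped
    labels:
      # Opt this container in to Traefik's Docker discovery
      - traefik.enable=true
      # Route requests for this hostname to this container
      - traefik.http.routers.test.rule=Host(`test.semiherdogan.net`)
      # Accept traffic on Traefik's "web" entry point
      - traefik.http.routers.test.entrypoints=web
      # Port the app listens on inside the container
      - traefik.http.services.test.loadbalancer.server.port=80
    networks:
      - proxy

# The shared network is created once, outside this project
networks:
  proxy:
    external: true
```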

The required shape is easy to understand:

- join the shared proxy network

- declare the hostname in Traefik

- let the tunnel rule send traffic to that entry point

That repeatability matters more than the individual labels themselves. Once the naming and network pattern are stable, the cost of adding another service drops a lot because the shape of the problem is already known.
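To illustrate, a hypothetical second project would follow the same shape, changing only the hostname, the router and service names, and the internal port. The hostname `api.semiherdogan.net` and port `3000` below are made up for the example, and the project would still need its own hostname rule on the tunnel side.

```yaml
services:
  api:
    build: .
    restart: unless-stopped
    labels:
      - traefik.enable=true
      # New router/service name ("api"), distinct from the first project's "test"
      - traefik.http.routers.api.rule=Host(`api.semiherdogan.net`)
      - traefik.http.routers.api.entrypoints=web
      # Hypothetical internal port for this app
      - traefik.http.services.api.loadbalancer.server.port=3000
    networks:
      - proxy

networks:
  proxy:
    external: true
```

Router and service names are identifiers inside Traefik's configuration, so reusing `test` here would collide with the first project. A per-project naming convention avoids that.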

Cloudflare Tunnel also changes the order of concerns in a useful way. Instead of thinking first about direct exposure, port handling, and the outer edge of the server, I can focus more on internal service organization and routing.

That does not remove the need to think carefully, but it does remove some of the repetitive edge-work that usually makes self-hosting feel more fragile than it needs to be.

It also gives me an easy path for the cases where a domain, subdomain, or internal endpoint should not be openly reachable. In those cases, Cloudflare Zero Trust fits naturally into the same setup and makes it straightforward to put a protected layer in front of something without rethinking the whole deployment model.

Overall, the setup is easier to extend, easier to keep mentally organized, and much nicer to work with than a collection of separate, hand-made deployment paths.

The interesting part is not the individual tools. It is that together they create a setup where future projects feel cheaper to add.

And that is exactly the kind of infrastructure decision I tend to like: not flashy, but immediately useful once the number of projects starts growing.

Where I would be careful

The main risk in setups like this is not usually the first deployment. It is slow operational drift.

If hostnames, labels, networks, and entry points are not kept consistent, the “clean structure” benefit disappears surprisingly fast. The same is true if observability is left behind. Once multiple services share the same routing layer, it becomes more important to know what is failing, where requests are going, and which layer is actually responsible when something breaks.
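As a small step toward that visibility, Traefik can log every request it routes. A minimal sketch of flags that could be added to the Traefik container's `command` list; the log level and JSON format are choices, not requirements:

```yaml
services:
  traefik:
    command:
      # ...keep the existing provider and entry point flags here...
      # General Traefik log verbosity
      - --log.level=INFO
      # Log each routed request (written to stdout by default)
      - --accesslog=true
      # JSON lines are easier to filter by router, host, or status
      - --accesslog.format=json
```

With access logs on, `docker compose logs traefik` shows each request with fields such as `RequestHost`, `RouterName`, and `DownstreamStatus`, which helps tell whether a failure sits in routing or in the service behind it.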

That is also why I like this stack more as a pattern than as a one-time setup. The value is not just that the first app is online. The value is that the second, third, and fourth app can follow the same rules without the server turning back into improvisation.

Where this is probably too much

If you only have one small app and do not expect that to change, this may be more structure than you actually need. In that case, a simpler setup might be the better engineering decision.

What makes this stack appealing is not that it is universally better. It is that it starts paying off when you want a server to host multiple projects without every new service feeling like a fresh round of setup decisions.